Large Language Models (LLMs)

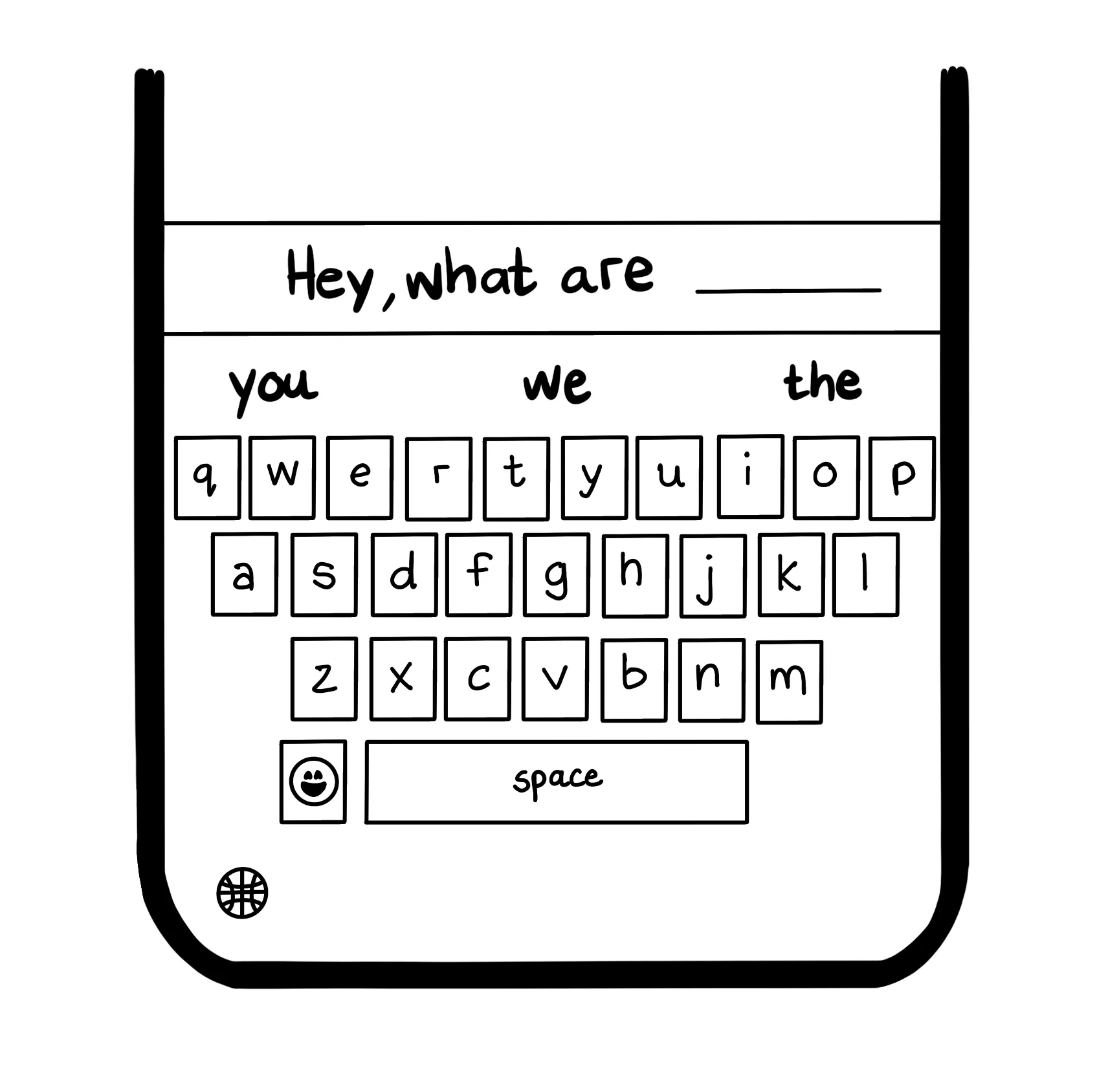

Large language models (LLMs) are machine learning models specialized for natural language problems such as text generation, summarization, translation, and question answering. At their core, they learn to predict what token should come next given the context so far. Consider the autocomplete feature on your mobile device’s keyboard, shown below. When you start typing “Hey, what are…”, the keyboard likely predicts that the next word is “you”, “we”, or “the”, because these are common continuations of that phrase. In miniature, that is what language modeling looks like: use patterns from training data to estimate the next likely token.

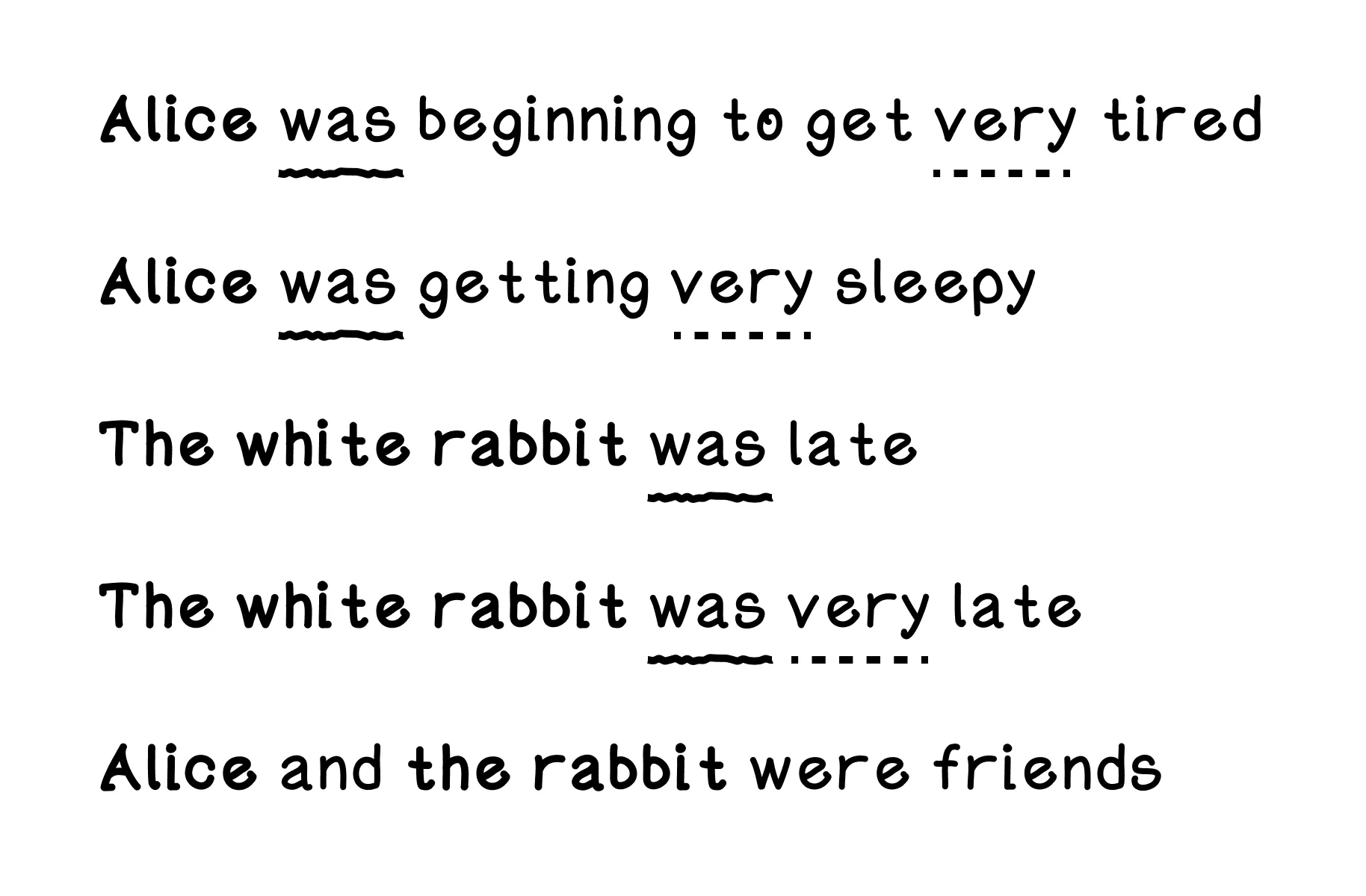

Suppose we have the following short sentences from the book Alice’s Adventures in Wonderland.

- Alice was beginning to get very tired

- Alice was getting very sleepy

- The white rabbit was late

- The white rabbit was very late

- Alice and the rabbit were friends

From simply reading these sentences, you might already recognize some patterns, like “Alice” and “The white rabbit” are always the nouns, “was” normally comes after “Alice” and after “The white rabbit”, and the adjective “very” is used a lot. It’s normal for us because our brains are pattern matching machines.

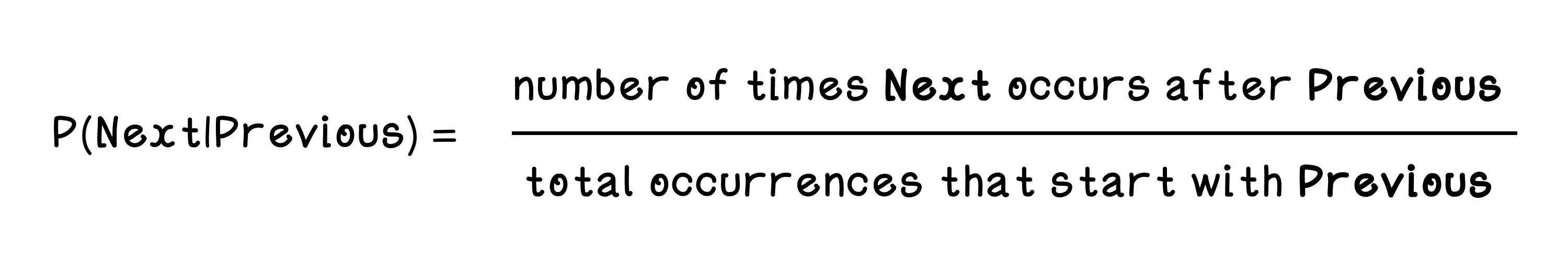

A simple concept in language models is bigrams. Bigrams are 2-word pairs such as “Alice was” or “white rabbit”. If we treat the first word as the context and the second as a possible continuation, we can build a table of probabilities from the sentences available for training. The probability is calculated by dividing the number of times a specific next word appears after a previous word by the total number of continuations for that previous word. This previous-and-next pairing is a bigram. Think of this like your phone’s autocomplete. If you type “Alice”, the model looks at its training data to see what word usually comes next. If “was” appears 90 times after “Alice”, and “ran” only appears 10 times, the model assigns a 90% probability to “was” and suggests it as the next word.

| # | Previous word | Next word | Occurrence count | Probability calculation | Probability |

|---|---|---|---|---|---|

| 1 | Alice | was | 2 | 2/3 | 0.67 |

| 2 | Alice | and | 1 | 1/3 | 0.33 |

| Alice occurrence count: 3 | |||||

| 3 | was | beginning | 1 | 1/4 | 0.25 |

| 4 | was | getting | 1 | 1/4 | 0.25 |

| 5 | was | late | 1 | 1/4 | 0.25 |

| 6 | was | very | 1 | 1/4 | 0.25 |

| was occurrence count: 4 | |||||

| 7 | beginning | to | 1 | 1/1 | 1 |

| 8 | to | get | 1 | 1/1 | 1 |

| 9 | get | very | 1 | 1/1 | 1 |

| 10 | very | tired | 1 | 1/3 | 0.33 |

| 11 | very | sleepy | 1 | 1/3 | 0.33 |

| 12 | very | late | 1 | 1/3 | 0.33 |

| very occurrence count: 3 | |||||

| 13 | getting | very | 1 | 1/1 | 1 |

| 14 | the | White | 2 | 2/3 | 0.67 |

| 15 | the | Rabbit | 1 | 1/3 | 0.33 |

| the occurrence count: 3 | |||||

| 16 | White | Rabbit | 2 | 2/2 | 1 |

| 17 | Rabbit | was | 2 | 2/3 | 0.67 |

| 18 | Rabbit | were | 1 | 1/3 | 0.33 |

| Rabbit occurrence count: 3 | |||||

| 19 | and | the | 1 | 1/1 | 1 |

| 20 | were | friends | 1 | 1/1 | 1 |

The key point is that the model does not “understand” Alice or rabbits in a human sense. It only counts which words tend to follow which other words and turns those counts into probabilities.

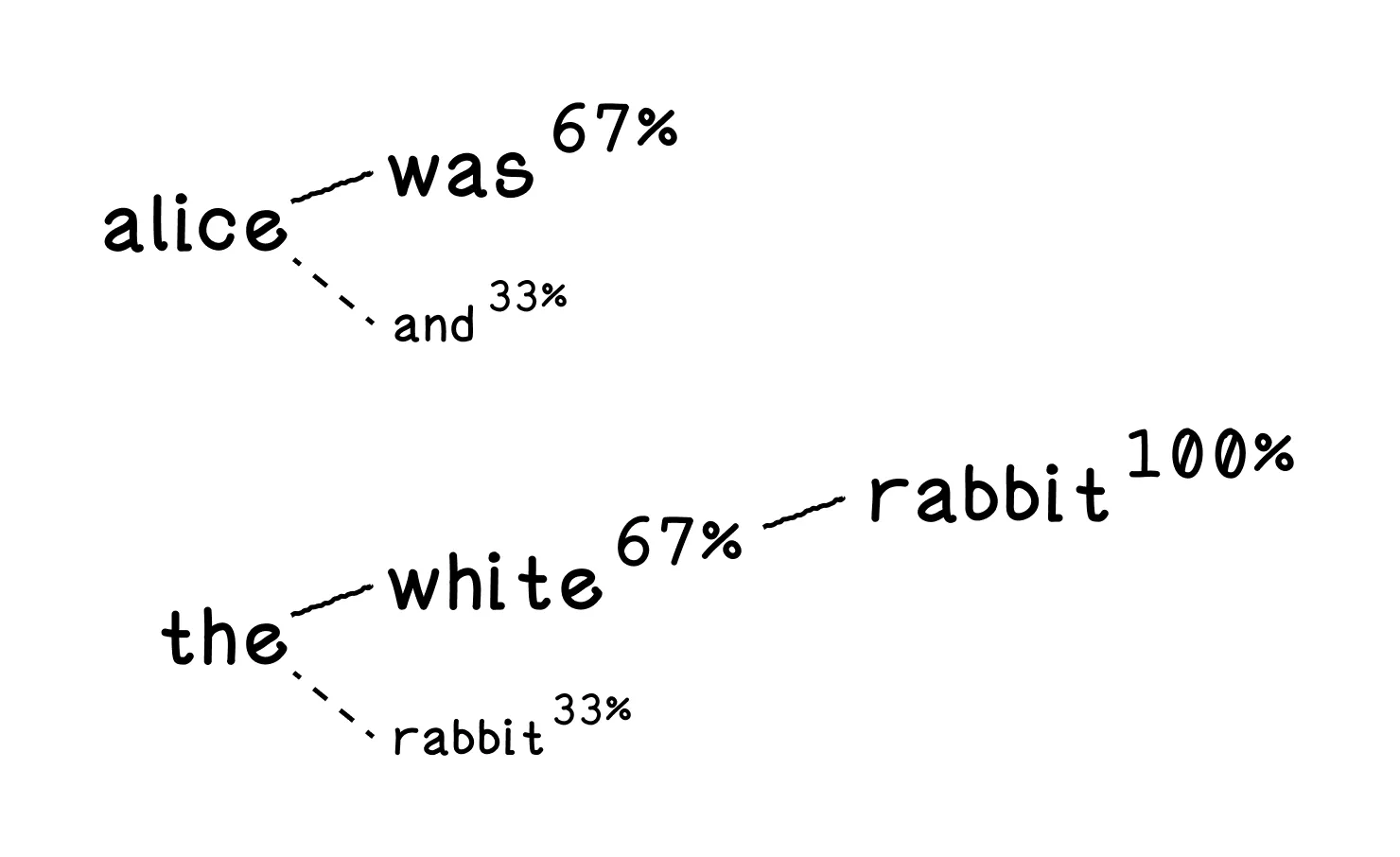

Now, using this table of probabilities, we can make predictions. Given the word “Alice”, the model has two options for the next word: “was” with a probability of 0.67, and “and” with a probability of 0.33. That means the model will most often continue with “Alice was”. Given the word “the”, the options are “White” with a probability of 0.67 and “Rabbit” with a probability of 0.33. So the completion is likely to become “the White”, and then “White” has only one continuation with a probability of 1.0: “Rabbit”. Step by step, the model builds the sentence “the White Rabbit”.

You can see how this tiny example of just 5 sentences and 29 tokens can already produce a faint version of the behavior we expect from a language model.

What the bigram model gets right

This toy captures the central loop of language modeling surprisingly well: look at the context you have, estimate the most likely next token, then repeat. That next-token prediction idea is still at the heart of modern large language models.

Why real LLMs need more context

Bigrams are useful, but they only remember one word of context. That means they break down quickly when meaning depends on longer phrases, syntax, or distant references. Real LLMs need richer representations so they can keep track of many tokens at once and reason about how they relate to one another.

Tokens, embeddings, and attention

Modern LLMs still begin with tokens, but they do not store them as simple word counts. Instead, they convert tokens into numeric vectors called embeddings, which capture relationships among words, subwords, and symbols in a continuous space. The model then uses attention mechanisms to decide which earlier tokens matter most when predicting the next one. That is a major leap beyond a bigram table: instead of only asking “what usually follows this word?”, the model can ask “which parts of the full context matter most right now?”

From toy model to transformer

That broader context handling is why transformers became so important. A transformer-based LLM can look across many tokens, model long-range dependencies, and reuse patterns learned from enormous datasets. The tiny model on this page does not do any of that. It is deliberately simple so you can see the predictive core clearly first. Once that intuition is in place, the jump to embeddings, attention, and transformers makes much more sense.

The toy below has two parts. First, run the trainer and watch it build up counts and probabilities from the sample phrases. Then switch to the tester and try constructing sentences by following the model’s next-word options. That split is useful because it lets you see both sides of the algorithm: how the model is trained and how it generates text once those probabilities exist.

As you play with it, notice where the model feels convincing and where it breaks down. It can produce locally plausible next words, but it quickly loses coherence when the context gets longer than one word. That weakness is exactly why modern LLMs need richer context handling.

Test a tiny language model

Bigram table

| Word | Next Word | Occurrence Count | Probability |

|---|

Large Language Models (LLMs) Frequently Asked Questions (FAQ)

What is a large language model?

A large language model is a system trained to predict likely sequences of words or tokens. Modern LLMs use that prediction ability to generate text, answer questions, summarize information, and follow instructions.

What is a bigram model?

A bigram model predicts the next word based only on the previous word. It is far simpler than a modern LLM, but it is still useful for understanding the core idea of next-token prediction.

Why start with a bigram model instead of a full transformer?

Because it isolates the basic learning problem. Once next-token prediction feels intuitive in a tiny model, it is much easier to understand what larger architectures are scaling up.

What does next-token prediction mean?

It means the model estimates what word or token is most likely to come after the current context. That simple objective underlies much of modern language modeling.

Why do real language models need more context than a bigram model?

Language depends on meaning carried across many words, sentences, and ideas, not just a single previous token. That is why modern LLMs use architectures that can pay attention to much longer context windows.

What is a token in language modeling?

A token is a unit the model processes, such as a word, subword, or symbol. Real LLMs usually operate on token sequences rather than raw characters or full sentences.

Why are probabilities important in language models?

Probabilities let the model rank possible next tokens instead of making only one rigid choice. That is what makes generation flexible and allows more than one plausible continuation.

What are embeddings in an LLM?

Embeddings are learned numeric representations of tokens that place related words or pieces of text into meaningful geometric space. They help the model capture relationships beyond simple counts.

What is attention in a transformer?

Attention is the mechanism that lets the model weigh different parts of the context when predicting the next token. It is one of the major reasons modern LLMs can use long-range context effectively.

What does the chapter demo help you understand?

It makes language modeling tangible by showing how counts turn into probabilities and how those probabilities drive generation. You can see the predictive loop in a stripped-down form.

Are LLMs just autocomplete?

Autocomplete is one visible behavior, but modern LLMs are much more general. Because they model language patterns at scale, they can support summarization, reasoning-like behavior, coding help, translation, and more.